Content Factory

A code-first video pipeline — idea → SRT → storyboard → animated scenes → MP4 / PDF, one command.

- Role: Solo build

- Period: 2025 – present

- typescript

- react

- remotion

- ffmpeg

- pipelines

- noir

Files & links

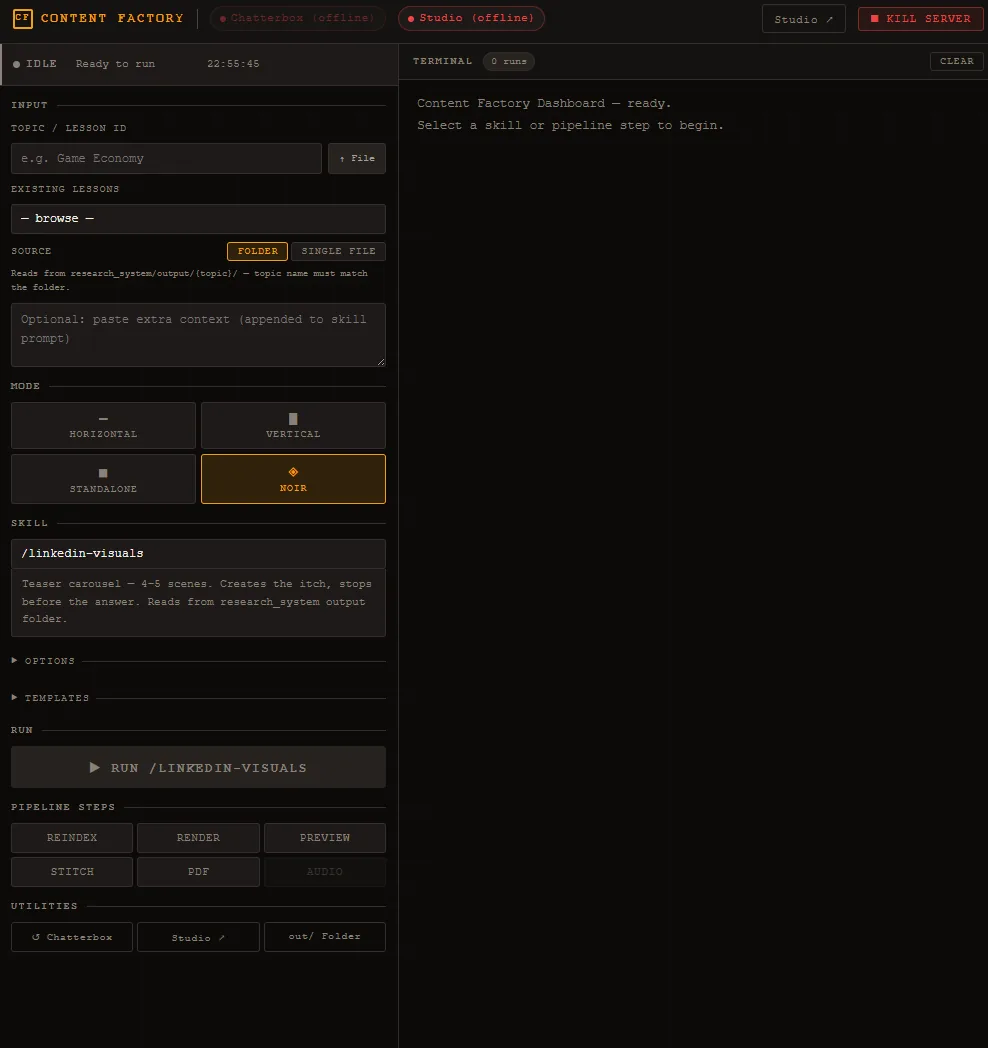

A TypeScript + React (Remotion) video factory that compiles idea → SRT → storyboard → animated scenes → stitched MP4 / PDF carousel as a single command, and exposes the whole pipeline through a local web dashboard so I can ship LinkedIn posts and course lessons in minutes instead of hours.

In daily use. Drives weekly LinkedIn output and a course-in-progress. 50+ shipped pipeline phases to date, each scoped and reversible.

Short-form video is now the highest-leverage distribution channel for technical work — but the production loop (record → edit → caption → mix → cut variants) is the actual cost. Every minute of finished video on a normal NLE timeline costs 30–60 minutes of clicking. That cost compounds: one idea, four output formats, four edits.

The bet: video should be a build artifact. If the script and the visual structure are data, four format variants is a --mode flag, not four edits. And if the system is a programmable pipeline, an LLM can drive it.

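A single recording on my phone can become a 1920×1080 video, a 1080×1920 split-facecam video, a 1080×1920 standalone video, or a 1080×1350 PDF carousel, all from the same runtime configuration, by changing one flag.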

The pipeline is also driven from a local browser dashboard: pick a mode, upload an SRT + audio, watch the storyboard plan render scene-by-scene, edit any scene’s text inline, re-emit — all without touching the terminal.

Every scene is a typed React component (TitleCard, BulletList, PullQuote, StatCallout, BigStatement, CTASlide, plus a parallel Noir theme — corkboard backdrop, manila paper, red stamps, typewriter fonts). A “video” is just a RuntimeLessonAnimation[] — a JSON-shaped description of what plays when. Remotion handles rendering; the source of truth is structured data, so the same lesson reflows correctly across 1920×1080, 1080×1920 split-facecam, 1080×1920 standalone, and 1080×1350 PDF — driven by a --mode flag, not a re-edit.
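To make the "structured data, not a re-edit" idea concrete, here is a minimal sketch of how a mode flag could select the canvas while the scene list stays untouched. The canvas dimensions come from the text above; the mode names, the `Scene` fields, and `planRender` are hypothetical, since the real `RuntimeLessonAnimation` type is not shown here.

```typescript
// Hypothetical mode names; only the pixel dimensions are from the write-up.
type Mode = "landscape" | "split-facecam" | "vertical" | "pdf";

interface Canvas {
  width: number;
  height: number;
}

const CANVAS: Record<Mode, Canvas> = {
  landscape: { width: 1920, height: 1080 },
  "split-facecam": { width: 1080, height: 1920 },
  vertical: { width: 1080, height: 1920 },
  pdf: { width: 1080, height: 1350 },
};

// A discriminated union keeps each scene's props type-checked.
// Field names are assumptions, not the project's actual schema.
type Scene =
  | { kind: "TitleCard"; title: string; durationInFrames: number }
  | { kind: "BulletList"; heading: string; bullets: string[]; durationInFrames: number }
  | { kind: "CTASlide"; text: string; durationInFrames: number };

// The same scene list feeds every mode; only the canvas changes.
function planRender(lesson: Scene[], mode: Mode, fps = 30) {
  const { width, height } = CANVAS[mode];
  const durationInFrames = lesson.reduce((sum, s) => sum + s.durationInFrames, 0);
  return { width, height, fps, durationInFrames };
}
```

The returned object has the same shape as the props a Remotion composition needs (`width`, `height`, `fps`, `durationInFrames`), which is why a layout switch can stay a configuration change.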

The hardest part of this kind of system is going from “I just talked into my phone” to “here’s a structured visual plan.” Two paths solve it:

- The heuristic CLI (storyboard-from-srt.ts) detects silence gaps, classifies each segment against six visual templates with a confidence-ranked rule set, and emits a runtime config + per-scene WAV slices + caption metadata. Free, fast, deterministic.

- The /storyboard Claude Code skill does the same job but with reasoning: it cleans SRT mis-hears against an optional post.md ground truth, re-segments on idea boundaries instead of silence, and synthesises Mayer-compliant on-screen text that is distilled, not mirrored, from the narration. It enforces a CTA as the final scene by construction.

Both paths converge on the same downstream artifacts, so the rest of the pipeline is identical.
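The silence-gap step of the heuristic path can be sketched as follows. This is not the code in storyboard-from-srt.ts; the `Cue` shape, the function names, and the 0.6-second threshold are assumptions chosen for illustration, and template classification is left out.

```typescript
interface Cue {
  start: number; // seconds
  end: number; // seconds
  text: string;
}

// "00:00:01,500" -> 1.5
function srtTimeToSeconds(t: string): number {
  const m = /^(\d+):(\d{2}):(\d{2})[,.](\d{3})$/.exec(t.trim());
  if (!m) throw new Error(`Bad SRT timestamp: ${t}`);
  return Number(m[1]) * 3600 + Number(m[2]) * 60 + Number(m[3]) + Number(m[4]) / 1000;
}

// Parse SRT blocks (index line, timing line, one or more text lines).
function parseSrt(srt: string): Cue[] {
  return srt
    .replace(/\r/g, "")
    .trim()
    .split(/\n{2,}/)
    .map((block) => {
      const lines = block.split("\n");
      const timing = lines.findIndex((l) => l.includes("-->"));
      if (timing < 0) throw new Error(`Missing timing line in block: ${block}`);
      const [from, to] = lines[timing].split("-->");
      return {
        start: srtTimeToSeconds(from),
        end: srtTimeToSeconds(to),
        text: lines.slice(timing + 1).join(" ").trim(),
      };
    });
}

// Start a new segment whenever the pause before a cue reaches minGap seconds.
function segmentOnSilence(cues: Cue[], minGap = 0.6): Cue[][] {
  const segments: Cue[][] = [];
  let current: Cue[] = [];
  let prevEnd = 0;
  for (const cue of cues) {
    if (current.length > 0 && cue.start - prevEnd < minGap) {
      current.push(cue);
    } else {
      current = [cue];
      segments.push(current);
    }
    prevEnd = cue.end;
  }
  return segments;
}
```

Each resulting segment would then be handed to whatever classifier picks a visual template; because the split depends only on timestamps, the same input always yields the same segments.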

A long-standing pain in video tooling: “the visuals drift from the voice.” Solved here by making the master WAV authoritative — the beat-emitter auto-pads scene durations to close inter-scene silence gaps, freeze-extends the final frame to match voiceover length, and slices captions per scene from the SRT by time-overlap (not by trusting upstream caption blocks).
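A minimal sketch of the two audio-authoritative rules described above: stretching scenes so no silence falls between them (with the final scene extended to the master track length), and slicing captions by time overlap. The `Span` and `Caption` shapes and both function names are hypothetical, and the real beat-emitter may well work in frames rather than seconds.

```typescript
interface Span {
  start: number; // seconds on the master WAV timeline
  end: number;
}

interface Caption extends Span {
  text: string;
}

// Extend each scene to the start of the next one; the last scene is
// extended (frozen) until the master audio ends.
function closeGaps(scenes: Span[], masterDuration: number): Span[] {
  return scenes.map((scene, i) => {
    const next = scenes[i + 1];
    const end = next
      ? Math.max(scene.end, next.start)
      : Math.max(scene.end, masterDuration);
    return { start: scene.start, end };
  });
}

// Pick every caption that overlaps the scene, clip it to the scene's
// bounds, and re-base its times so 0 is the start of the scene.
function captionsForScene(scene: Span, captions: Caption[]): Caption[] {
  return captions
    .filter((c) => c.start < scene.end && c.end > scene.start)
    .map((c) => ({
      text: c.text,
      start: Math.max(c.start, scene.start) - scene.start,
      end: Math.min(c.end, scene.end) - scene.start,
    }));
}
```

A caption that straddles a scene boundary appears in both scenes, clipped on each side, which is the practical difference between overlap-based slicing and trusting upstream caption blocks.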

Drop a video.mp4 in the input folder and the system: extracts its audio (overwriting any existing voiceover — video wins), uses it as the master track, and overlays the face-cam as a 480×270 picture-in-picture at one of five anchor positions, with an alpha fade-out on the tail if the face-cam is shorter than the final video. No re-render of the underlying visuals — pure FFmpeg overlay at stitch time.
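The picture-in-picture step can be expressed as a single FFmpeg filter graph. The sketch below only builds the filter string; it is one plausible way to do it, not the project's stitch code. The anchor names, the 24-pixel margin, and the 0.5-second fade length are assumptions, while `scale`, `format`, `fade` (with `alpha=1`), and `overlay` (with `eof_action`) are standard FFmpeg filters and options.

```typescript
// Hypothetical names for the five anchor positions.
type Anchor = "top-left" | "top-right" | "bottom-left" | "bottom-right" | "center";

// W/H are the main video's size and w/h the overlay's size,
// as defined by FFmpeg's overlay filter.
function overlayPosition(anchor: Anchor, margin = 24): { x: string; y: string } {
  const m = String(margin);
  const positions: Record<Anchor, { x: string; y: string }> = {
    "top-left": { x: m, y: m },
    "top-right": { x: `W-w-${m}`, y: m },
    "bottom-left": { x: m, y: `H-h-${m}` },
    "bottom-right": { x: `W-w-${m}`, y: `H-h-${m}` },
    center: { x: "(W-w)/2", y: "(H-h)/2" },
  };
  return positions[anchor];
}

// Input 0 is the rendered video, input 1 is the face-cam.
function pipFilter(
  anchor: Anchor,
  facecamSeconds: number,
  finalSeconds: number,
  fadeSeconds = 0.5,
): string {
  const { x, y } = overlayPosition(anchor);
  const chain = ["scale=480:270"];
  if (facecamSeconds < finalSeconds) {
    // Fade the overlay's alpha channel out over its last fadeSeconds.
    const st = Math.max(0, facecamSeconds - fadeSeconds);
    chain.push("format=yuva420p", `fade=t=out:st=${st}:d=${fadeSeconds}:alpha=1`);
  }
  // eof_action=pass lets the main video continue after the face-cam ends.
  return `[1:v]${chain.join(",")}[pip];[0:v][pip]overlay=x=${x}:y=${y}:eof_action=pass[out]`;
}
```

The string would be passed as the value of `-filter_complex`, with `-map "[out]"` selecting the composited stream. Passing it as a single argv element (for example through `child_process.spawn`) avoids shell-quoting problems with the brackets and parentheses.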

dashboard/server.ts (~700 lines, Express, loopback-only with a per-boot token) drives the whole thing through a browser:

- The /storyboard skill needs a human “go” before committing, so the dashboard mints a UUID, passes --session-id on turn 1 and --resume on every follow-up turn, preserving Claude’s in-memory context across the approval pause.

Every pipeline run writes phase sidecars (_phase-build.json, _phase-render.json, _phase-stitch.json…). A reflection script aggregates them into SELF-IMPROVEMENT.md, a cumulative log of error counters, per-scene sync stats, and bundle and render times. The next run reads its own history, so regressions surface as data, not vibes.
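As an illustration of how sidecar data can surface regressions, here is a small sketch that summarises one run and compares it with the previous one. The `PhaseSidecar` fields, the function names, and the 20% tolerance are all assumptions; the actual sidecar schema and the format of SELF-IMPROVEMENT.md are not shown in this write-up. Reading the JSON files from disk is omitted to keep the example pure.

```typescript
// Hypothetical sidecar shape; the real files likely carry more fields.
interface PhaseSidecar {
  phase: string; // e.g. "build", "render", "stitch"
  durationMs: number;
  errors: number;
}

// Render one run as a few Markdown lines suitable for appending to a log.
function summarize(sidecars: PhaseSidecar[]): string {
  const lines = sidecars.map(
    (s) => `- ${s.phase}: ${(s.durationMs / 1000).toFixed(1)}s, ${s.errors} error(s)`,
  );
  const totalErrors = sidecars.reduce((sum, s) => sum + s.errors, 0);
  return [...lines, `Total errors: ${totalErrors}`].join("\n");
}

// Names of phases that got slower than `tolerance` times the previous run.
function regressions(
  previous: PhaseSidecar[],
  current: PhaseSidecar[],
  tolerance = 1.2,
): string[] {
  const before = new Map<string, number>();
  for (const s of previous) before.set(s.phase, s.durationMs);
  return current
    .filter((s) => {
      const prev = before.get(s.phase);
      return prev !== undefined && s.durationMs > prev * tolerance;
    })
    .map((s) => s.phase);
}
```

Keeping the comparison a pure function of two sidecar lists means it can run in CI against checked-in history as easily as after a local render.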

- initial_prompt to fix proper-noun mis-hears

- The /storyboard skill is the primary path; the heuristic CLI does the same job for free in CI. Both produce the same artifacts, so the rest of the system doesn’t care which created them.

In daily use. Currently driving LinkedIn output and a course-in-progress. The roadmap (IMPROVEMENT-PLAN.md) is versioned phase by phase: 50+ shipped phases, each scoped, justified, and reversible.